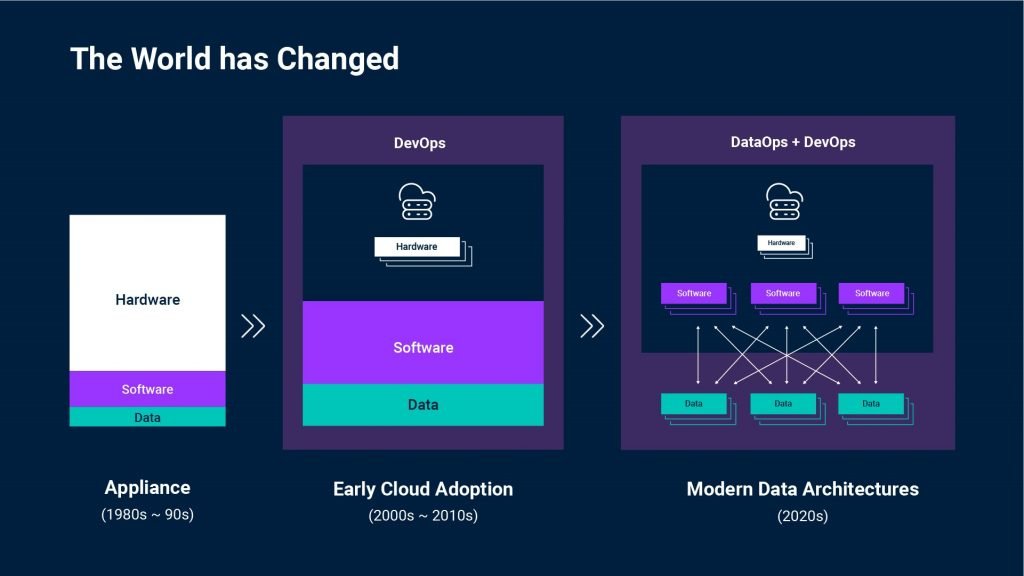

Over the past 20 years, the relationship between hardware, software and data has changed dramatically, and so have the consequences for your business. The old model of hardware, software and a tiny sliver of data in the middle, all contained within a single system in a large box, is unrecognizable by today’s standards.

Those changes began around 2000, as we entered the era of VMware, hypervisors, and early cloud. Enterprises started focusing on software, and the role of hardware started to shrink. While this gave rise to DevOps, software and data still existed together as a single unit.

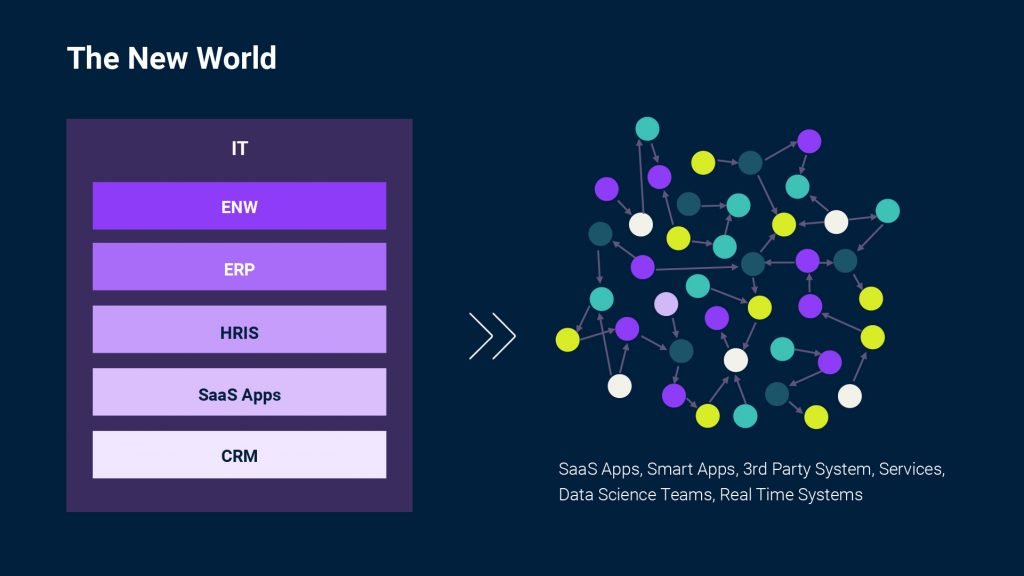

In the past 5 years, what started as an evolutionary change took a huge step. Today hardware is no longer at the forefront of any conversation. And the handful of big blobs of software that enterprises invested in? They have been atomized into hundreds of smaller, highly complex, agile applications, systems and even microservices in the cloud. This splintered application landscape recharged the integration market and drove massive adoption of iPaaS – Integration Platform-as-a-Service – to easily reconnect key workflows and processes.

But data! Data became the connective tissue ensuring hundreds of otherwise fragmented systems work together as an intelligent whole supporting various business processes. And DataOps—the set of practices and technologies to operationalize data management and integration—came onto the scene blazing.

One way to think about this new paradigm is that instead of sending 5 jumbo jets lumbering along predetermined flight paths, we now have fleets of 5000 drones. These can move in any direction freely, reorganize to act as one, split into groups as needed, and address any obstacles—anticipated or not—in their way. And all on-the-fly.

That is, if you can connect them. Can you?

The New World: StreamSets gets the data to applications, powered by webMethods, across complex data pipelines.

Data Drift and the Single Experience

Part of this new reality is that all these data, and all these systems, are continuously moving in unpredictable ways. “Data drift” is when these unexpected and undocumented changes break processes and corrupt data. It can be as simple as the transition from a 10-digit to 12-digit ID cascading through tens of thousands of applications, or a changed IP address format disrupting data going to a dashboard and going undetected for weeks or months.

But data drift can also reveal new opportunities, such as drifting with the data to make sure nothing breaks on either end, so you can speed up innovation. That’s what StreamSets does.

StreamSets, like webMethods, isn’t hardwired into the systems it connects. So whether data needs to be in Amazon Redshift, Snowflake, Google BigQuery or any other system, StreamSets can infer schemas dynamically at runtime and drift without breaking as a schema changes. Such smart data pipelines are the self-healing connective tissue that gets data where it is needed. That means if StreamSets encounters a situation where yesterday there were 24 data fields, and today there are 30, and they are in a different order, it’s not a problem.
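To make the idea of runtime schema inference concrete, here is a minimal Python sketch. It is not StreamSets code and does not use any StreamSets API; the function names `infer_schema` and `diff_schema` and the sample records are hypothetical, chosen only to illustrate how a pipeline might key on field names rather than field positions so that added or reordered fields do not break it.

```python
from typing import Any


def infer_schema(record: dict[str, Any]) -> dict[str, str]:
    """Infer a field-name -> type-name mapping from a single record at runtime."""
    return {name: type(value).__name__ for name, value in record.items()}


def diff_schema(old: dict[str, str], new: dict[str, str]) -> dict[str, list[str]]:
    """Report drift between two inferred schemas; field order is ignored."""
    shared = set(old) & set(new)
    return {
        "added": sorted(set(new) - set(old)),
        "removed": sorted(set(old) - set(new)),
        "retyped": sorted(name for name in shared if old[name] != new[name]),
    }


# Hypothetical records: today's record has a new field, a longer ID,
# and a different field order than yesterday's.
yesterday = {"device_id": "0123456789", "temp_c": 21.5}
today = {"temp_c": 22.0, "firmware": "2.1", "device_id": "012345678901"}

drift = diff_schema(infer_schema(yesterday), infer_schema(today))
print(drift)
```

Because the comparison works on field names, the reordering is invisible and the new `firmware` field is simply reported as added; a downstream step could then decide whether to extend the target table or ignore the field.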

But this is still only half the story: interpreting and taking action is where data makes a real difference. This is where application integration steps in – with event-driven, automated workflows and orchestration to transform and activate the data. Adding a powerful integration platform means your data provides better visibility and insights, real-time engagement with customers, better processes and easier transactions.

webMethods + StreamSets = Better Integration, Faster Innovation

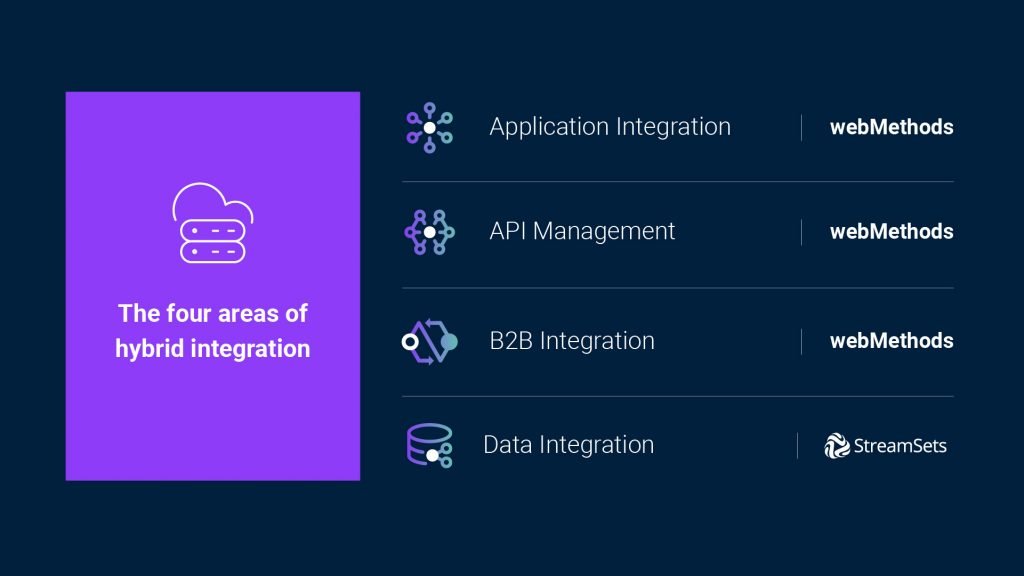

In the case of webMethods and StreamSets, 1 + 1 really is greater than 2. The fragmentation of data and apps—at enterprise scale and with advanced security—is finally being addressed by a single vendor in the hybrid integration space covering data, apps, B2B and APIs.

A good use case for this power is a business using IoT with a continuously flowing, complex maelstrom of data coming in from its devices. In many cases, those devices aren’t sending single data points, but eddying streams consisting of temperature, runtime, rotational speed, vibrational delta tolerances and many more complex machinery calculations. StreamSets opens continuous data pipelines for seamlessly ingesting and analyzing the data in a cloud data warehouse. The intelligence and insights from the analysis can be leveraged to update applications for device maintenance, technician dispatch, automated resets and more with webMethods.io driving the business logic to close the loop.
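The closed loop described above can be sketched in a few lines of Python. This is an illustration only, under stated assumptions: the `Reading` class, the `LIMITS` thresholds, the `dispatch_technician` action name and the sample device IDs are all hypothetical, and the sketch does not call StreamSets or webMethods.io. It shows the general shape of the flow: readings come in, an analysis step flags devices outside tolerance, and an event is produced for an application workflow to act on.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    device_id: str
    temperature_c: float
    vibration_mm_s: float


# Hypothetical tolerance limits; real limits would come from the analysis layer.
LIMITS = {"temperature_c": 90.0, "vibration_mm_s": 7.1}


def maintenance_events(readings: list[Reading]) -> list[dict]:
    """Return one event per device whose reading exceeds any limit."""
    events = []
    for reading in readings:
        breached = [
            name for name, limit in LIMITS.items()
            if getattr(reading, name) > limit
        ]
        if breached:
            events.append({
                "device_id": reading.device_id,
                "action": "dispatch_technician",  # hypothetical workflow trigger
                "reasons": breached,
            })
    return events


readings = [Reading("pump-1", 72.0, 3.2), Reading("pump-2", 95.5, 8.0)]
events = maintenance_events(readings)
print(events)
```

In a real deployment the events would be handed to an integration workflow rather than printed, but the division of labor is the same: the data pipeline delivers and analyzes, and the application layer acts.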

So when something is about to fail, you already know. Replacements have been ordered, maintenance already scheduled, suppliers notified, and data captured for a cascade of analysis to update everything from products to processes—automatically without any downtime at all.

Welcome to the Convergence

Applications, APIs, data and microservices are the digital backbone that makes it possible to run the enterprise. But that backbone needs smart data pipelines, the connective tissue, to let all those independent systems work together and keep data-in-motion safe, secure, resilient and, more importantly, actionable.

For the first time, all these technologies are unified on a single platform. That’s the end of piecemeal solutions from multiple vendors. Instead, as the market shifts to the cloud, what is needed is a complete hybrid integration platform that combines data integration, application integration, B2B integration and API management.

So if you want to gain new business insights from data and continuously drive what you’ve learned back to applications and APIs to innovate faster—an even stronger “we” are here to help.