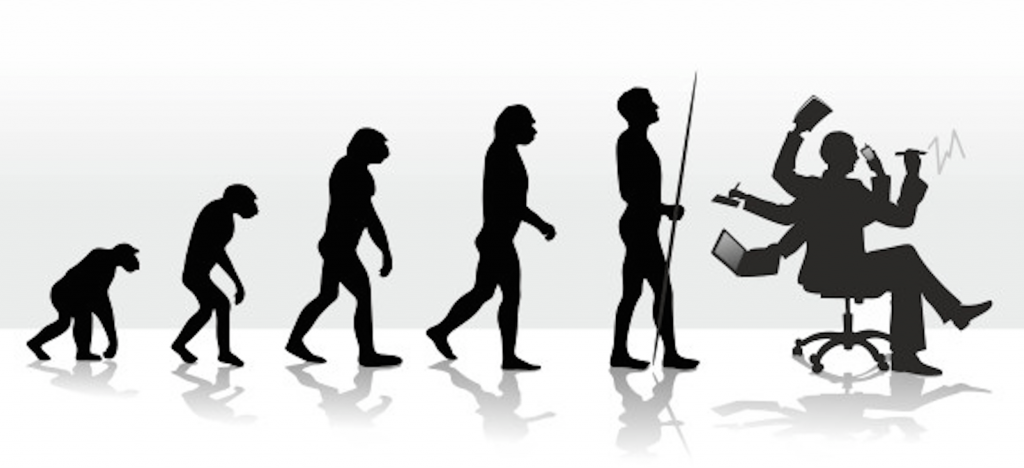

Today we hear a lot about streaming data, fast data, and data in motion. But the truth is that we have always needed ways to move our data. Historically, the industry has been pretty inventive about getting this done. From the early days of data warehousing and extract, transform, and load (ETL) to now, we have continued to adapt and create new data movement methods, even as the characteristics of the data and data processing architectures have dramatically changed.

Exerting firm control over data in motion is a critical competency that has become core to modern data integration and operations. Drawing on more than 20 years of experience in enterprise data, here is my take on the past, present and future of data in motion.

First Generation: Stocking the Data Warehouse via ETL

Let’s roll back a couple of decades. The first substantial data movement problems emerged in the mid-1990s with the enterprise data warehouse (EDW) trend. The goal was to move transaction data provided by disparate applications or residing in heterogeneous databases into a single location for analytical use by business units. Organizations operated a variety of applications, such as SAP, PeopleSoft and Siebel, as well as a variety of database technologies like Oracle, Sybase and IBM DB2. As a result, there was no simple way to access and move data; each integration was a bespoke project requiring an understanding of vendor-specific schemas and languages. The inability to “stock the data warehouse” efficiently caused EDW projects to fail or become excessively expensive.

ETL emerged as the tooling to successfully load the warehouse by creating connectors for applications and databases. I refer to this first generation as “schema-driven ETL” because for each source one needed to specify and map every incoming field into the data warehouse. It was developer-centric, focused on pre-processing (aggregating and blending) data at scale from multiple sources to create a uniform data set, primarily for business intelligence (BI) consumption. Enterprises spent millions of dollars on these first-generation tools that allowed developers to move data without dealing with the myriad languages of custom applications, fueling the creation of a multi-billion-dollar industry.
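To make the “schema-driven” label concrete, here is a minimal Python sketch of how such a mapping behaves. The source field names (`cust_id`, `cust_nm`, `rgn`), the `CRM_FIELD_MAP` dictionary and the `map_record` function are hypothetical illustrations, not any vendor’s ETL API. The point is that every incoming field must be declared in advance, so a record that gains or loses a field cannot be loaded until someone updates the map.

```python
from typing import Any

# Hypothetical field map for one source system: every incoming field must be
# declared and mapped to a warehouse column before any data can be loaded.
CRM_FIELD_MAP = {
    "cust_id": "customer_id",
    "cust_nm": "customer_name",
    "rgn": "region",
}


def map_record(record: dict[str, Any], field_map: dict[str, str]) -> dict[str, Any]:
    """Map a source record to warehouse columns, failing on any schema mismatch."""
    unexpected = set(record) - set(field_map)
    missing = set(field_map) - set(record)
    if unexpected or missing:
        # A schema-driven job has no rule for fields it was not told about.
        raise ValueError(
            f"schema mismatch: unexpected={sorted(unexpected)}, missing={sorted(missing)}"
        )
    return {field_map[src]: value for src, value in record.items()}
```

Calling `map_record({"cust_id": 7, "cust_nm": "Acme", "rgn": "EMEA"}, CRM_FIELD_MAP)` yields `{"customer_id": 7, "customer_name": "Acme", "region": "EMEA"}`, while the same record with an added `email` field raises `ValueError`. That rigidity was acceptable when sources changed rarely.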

Second Generation: SaaS leads to iPaaS

Over time, consolidation of the database and application markets into a small number of mega-vendors created a more homogeneous world. Organizations began to wonder if ETL was even relevant, since the new world order had done away with the fragmentation that spawned its existence.

But a new challenge replaced the old. By the mid-2000s, the emergence of SaaS applications, led by Salesforce.com, added another layer of complexity. The new questions were:

- How do we get cloud-based SaaS transaction data into warehouses?

- How do we synchronize information across multiple SaaS applications?

- Should we deploy data integration middleware in the cloud, on-premises or both?

As the SaaS delivery model proliferated, customer, product and other domain data became fragmented across dozens of different applications, usually with inconsistent data structures. Because SaaS applications are API-driven rather than language-driven, organizations faced a new challenge of rationalizing across the different flavors of APIs needed to send data between these various locations.

The SaaS revolution forced data movement technologies to evolve from analytic data integration, the sweet spot for data warehouses and ETL, to operational data integration, featuring data movement between applications. The greater focus on operational use raised the pressure on these systems to deliver trustworthy data quickly.

This new challenge led to the emergence of integration Platform-as-a-Service (iPaaS) as the second generation of tools for data in motion. These systems were provided both by legacy ETL vendors like Informatica and by newcomers like MuleSoft and SnapLogic. They featured myriad API-based connectors, a focus on data quality and master data management capabilities, and the ability to subscribe to data in motion as a cloud-based service. But some of the old characteristics of ETL systems were retained, in particular a reliance on schema mapping and a focus on “citizen integrator” productivity, with less attention paid to the challenges of streaming data and continuous operations.

This second generation continues to contribute to the rapid growth of a multi-billion-dollar industry.

The Need for a Third Generation

Of course, these first two generations were architected before the emergence of the Hadoop ecosystem and the big data revolution, which we are in the midst of today. Big data adds several new dimensions to the data movement problem:

- Data drift from new sources, including log files, IoT sensor output, and clickstream data. Data drift occurs when these sources undergo unexpected mutations to their schema and/or semantics, usually as a result of an upgrade to the source system. Data drift, if not detected and dealt with, leads to data loss and corruption which, in turn, pollutes downstream analysis and jeopardizes data-driven decisions.

- The emergence of streaming interaction data – think clickstreams or social network activity – that must be classified as “events” and processed quickly. This is a higher order of complexity than transactional data, and these events tend to be highly perishable, requiring analysis as close as possible to event occurrence.

- Data processing infrastructure that has become heterogeneous and complex. The big data stack is based on myriad open source projects, proprietary tools and cloud services. It chains together numerous components from data acquisition to complex flows that cross various systems like message queues, distributed key-value stores, storage/compute platforms and analytic frameworks. Most of these systems fall under different administrative and operational governance zones, which leads to a very complex maintenance schedule, multiple upgrade paths and more.

This combination of factors breaks data movement systems that were built for the needs of earlier generations and for the way data in motion used to be handled. Such systems end up being too tightly coupled, too opaque and too brittle to thrive in the big data world.

Legacy data movement systems are tightly coupled in that they rely on knowledge of specific characteristics of the data sources and processing components they connect. This was reasonable in an era when the data infrastructure was upgraded infrequently and in concert: the so-called (albeit painful) “galactic upgrade”. Today, a tightly coupled approach hampers agility by preventing the enterprise from taking advantage of new functionality and performance improvements in independent infrastructure components.

Opaqueness comes from the developer-centric approach of earlier solutions. Because the standard use case was batch movement of “slow data” from highly stable and well-governed sources, runtime visibility was not a high priority. Consuming real-time interaction data is an entirely new ballgame which requires continuous operational visibility.

Brittleness comes from the fact that schema is no longer static and, in some cases, does not exist. Processes built using schema-centric systems cannot be easily reworked in the face of data drift and tend to break unexpectedly. When combined with tight coupling and operational opaqueness, brittleness can lead to data quality issues – delivering both false positives and false negatives to consuming applications.

Third Generation: Data in Motion Middleware

To address these new challenges, enterprises need third-generation data in motion technology: middleware that can “performance manage” the flow of data by continuously monitoring and measuring the accuracy and availability of data as it makes its way from origin to destination.

Such a technology should have the following qualities:

It should be intent-driven rather than schema-driven. At design time only a minimally required set of conditions should be defined, not the entire schema. In a world of data drift, minimizing specification reduces the chance of data flows breaking and data loss.
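One way to picture “intent-driven” design is a minimal Python sketch, assuming records arrive as dictionaries: instead of mapping the full schema, the flow declares only the conditions the destination genuinely needs and lets every other field pass through. The `INTENT` dictionary, its condition names and the `check_intent` function are hypothetical illustrations, not a specific product’s configuration format.

```python
from typing import Any, Callable

Condition = Callable[[dict[str, Any]], bool]

# Hypothetical intent: only the minimal conditions the destination relies on.
# Fields not mentioned here are neither required nor rejected.
INTENT: dict[str, Condition] = {
    "has customer_id": lambda r: isinstance(r.get("customer_id"), int),
    "has event timestamp": lambda r: "ts" in r,
}


def check_intent(record: dict[str, Any], intent: dict[str, Condition]) -> list[str]:
    """Return the names of the intent conditions this record violates."""
    return [name for name, cond in intent.items() if not cond(record)]
```

A record such as `{"customer_id": 7, "ts": 1700000000, "browser": "firefox"}` satisfies the intent even though `browser` was never declared, so a new optional field does not break the flow; only a record that violates a stated condition is flagged.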

It should detect and address data drift during operations, replacing brittleness and opaqueness with flexibility and visibility. Streaming data requires complete operational control over the data flow so that quality issues can be quickly detected and corrected, either automatically or through alerts and proactive remediation. A set-and-forget approach that forces reactive operations is simply insufficient.
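A minimal sketch of runtime drift detection, assuming records arrive as Python dictionaries: a hypothetical `DriftMonitor` takes the first record as its baseline and reports fields that are later added, removed or retyped, so an alert can be raised instead of corrupted data being loaded silently. It covers only structural drift; semantic drift (say, a temperature field switching from Celsius to Fahrenheit) would need value-level checks that this sketch does not attempt.

```python
from dataclasses import dataclass, field
from typing import Any, Optional


@dataclass
class DriftMonitor:
    """Hypothetical monitor that flags structural drift against a baseline record.

    The first record observed becomes the baseline of field names and value
    types; later records are compared against it. Semantic drift (same field,
    new meaning) is out of scope for this sketch.
    """

    baseline: Optional[dict[str, type]] = None
    alerts: list[str] = field(default_factory=list)

    def observe(self, record: dict[str, Any]) -> list[str]:
        """Return drift findings for one record and append them to `alerts`."""
        current = {name: type(value) for name, value in record.items()}
        if self.baseline is None:
            self.baseline = current  # first record defines the expected shape
            return []
        findings = []
        for name in sorted(current.keys() - self.baseline.keys()):
            findings.append(f"added field {name!r}")
        for name in sorted(self.baseline.keys() - current.keys()):
            findings.append(f"removed field {name!r}")
        for name in sorted(current.keys() & self.baseline.keys()):
            old, new = self.baseline[name], current[name]
            if old is not new:
                findings.append(
                    f"field {name!r} changed type from {old.__name__} to {new.__name__}"
                )
        self.alerts.extend(findings)
        return findings
```

After `monitor.observe({"sensor_id": 1, "temp": 21.5})`, a later `monitor.observe({"sensor_id": 1, "temp": "21.5C"})` returns `["field 'temp' changed type from float to str"]`. Keeping the baseline fixed means every drifted record is flagged; a real system might instead adopt the new shape once an operator has reviewed the alert.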

Because modern data processing environments are more heterogeneous and dynamic, a third-generation solution must work as loosely coupled middleware, where components (origins, processors, destinations) are logically isolated and can therefore be upgraded or swapped out independently of one another and of the middleware itself. Besides affording the enterprise greater architectural agility, loose coupling also reduces the risk of technology lock-in, leaving the enterprise free to take advantage of innovation in cloud data platforms.
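The loose coupling of origins, processors and destinations can be sketched with Python `typing.Protocol` classes, assuming each stage agrees only on a narrow contract: origins `read()` records, processors `process()` a stream of records, and destinations `write()` them. `Origin`, `Processor`, `Destination`, `run_pipeline` and the three concrete stages are hypothetical names chosen for illustration, not an existing product’s API.

```python
from typing import Any, Iterable, Iterator, Protocol

Record = dict[str, Any]


class Origin(Protocol):
    def read(self) -> Iterable[Record]: ...


class Processor(Protocol):
    def process(self, records: Iterable[Record]) -> Iterator[Record]: ...


class Destination(Protocol):
    def write(self, records: Iterable[Record]) -> None: ...


def run_pipeline(origin: Origin, processors: list[Processor], destination: Destination) -> None:
    """Wire stages together through the read/process/write contract only."""
    records: Iterable[Record] = origin.read()
    for stage in processors:
        records = stage.process(records)
    destination.write(records)


# Hypothetical stages for illustration; each can be replaced by any other
# implementation of the same protocol without touching the rest.
class ListOrigin:
    def __init__(self, rows: list[Record]) -> None:
        self.rows = rows

    def read(self) -> Iterable[Record]:
        return iter(self.rows)


class DropNulls:
    def process(self, records: Iterable[Record]) -> Iterator[Record]:
        for r in records:
            yield {k: v for k, v in r.items() if v is not None}


class ListDestination:
    def __init__(self) -> None:
        self.written: list[Record] = []

    def write(self, records: Iterable[Record]) -> None:
        self.written.extend(records)
```

Because `run_pipeline` depends only on the protocols, swapping `ListDestination` for, say, a stage that writes to a cloud data platform would require no change to the origin or the processors.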

From Classical to Jazz | The New World of Data in Motion

To employ music as a metaphor, if the move from ETL to iPaaS was like adding instruments to an orchestra, the evolution from iPaaS to Data Platform Management is akin to moving from classical music to improvisational jazz. The first transition changed old instruments for new, but you were still reading sheet music. The transition we now face throws out the sheet music and asks the band to make continual, subtle, and unexpected shifts in the composition while maintaining the rhythm.

In the new world of drifting data, real-time requirements and complex, evolving cloud infrastructure, we must embrace a jazz-like approach, channel our inner Miles Davis, and shift to a third-generation mindset. Even though modern sources and data flows are dynamic and chaotic, they can still be blended to deliver beautiful insights.