UPDATE – Salesforce origin and destination stages, as well as a destination for Salesforce Wave Analytics, were released in StreamSets Data Collector 2.2.0.0. Use the supported, shipping Salesforce stages rather than the unsupported code mentioned below!

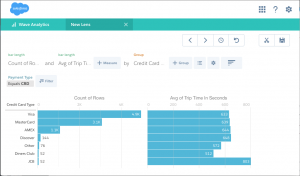

In my last blog entry I explained how you can write custom destinations to send data to systems not currently supported by StreamSets Data Collector. As you might know, my last gig was as a developer evangelist at Salesforce, so I put my experience to work writing a destination for Salesforce Wave Analytics. Now you can ingest data from a variety of origins, operate on it in a StreamSets pipeline, and write the results into a Wave dataset. Once the data is uploaded, you can combine the dataset with CRM and other data in Salesforce for analysis.

This video shows the destination in action, writing NYC taxi transaction data to Wave:

Technical Notes

As you probably know, StreamSets Data Collector ingests data continuously, but Wave is more batch-oriented. You can use Wave’s External Data API to create datasets and overwrite them, but not to append to them. As a result, the destination code uploads each batch of data as a separate dataset and uses a dataflow to combine those datasets into a single aggregated result.
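To make the batching idea concrete, here is a minimal Python sketch of how a stream of records might be split into separately named datasets. It is not the destination's actual code, which is written in Java: the function names, the `_00000` suffix scheme, and the shape of the plan entries are assumptions made for illustration. `EdgemartAlias` and `Operation` mirror fields of the External Data API's header object, but nothing here calls Salesforce.

```python
from itertools import islice


def batch_dataset_names(base_alias, records, batch_size):
    """Yield (dataset_alias, batch) pairs, one alias per batch.

    The numeric suffix scheme is hypothetical; the real destination
    may name its intermediate datasets differently.
    """
    it = iter(records)
    index = 0
    while True:
        batch = list(islice(it, batch_size))
        if not batch:
            return
        yield f"{base_alias}_{index:05d}", batch
        index += 1


def plan_uploads(base_alias, records, batch_size):
    """Describe the uploads needed, without contacting Salesforce.

    Every batch becomes its own dataset written with 'Overwrite',
    because appending to an existing dataset is not available.
    """
    plan = []
    for alias, batch in batch_dataset_names(base_alias, records, batch_size):
        plan.append({
            "EdgemartAlias": alias,    # name of the dataset for this batch
            "Operation": "Overwrite",  # create or replace, never append
            "rows": len(batch),        # illustrative bookkeeping only
        })
    return plan


if __name__ == "__main__":
    for step in plan_uploads("taxi", range(5), batch_size=2):
        print(step)
```

A dataflow would then take the list of aliases produced here as its inputs and combine them into the single aggregated dataset that analysts query.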

The destination code and tarball are on GitHub; feel free to download and try it out. Note that it is in an early stage of development. There may well be bugs – please report any issues on GitHub. The destination code also uses internal Salesforce APIs (the same ones used by Analytics Cloud DatasetUtils) to manage the dataflow, and will require updating when these are publicly released.