Before we get to building your custom JSON validator, let’s talk about the author and their thoughts on why JSON has become so essential in data engineering.

The author, Joel Klo, is a consultant at Bigspark, a UK engineering powerhouse delivering next-level data platforms and solutions to its clients. Those clients use modern cloud platforms and open source technologies, prioritize security, risk, and resilience, and leverage distributed and containerized workloads.

Introduction

JavaScript Object Notation, better known by its acronym JSON, has become the most widely used format for transferring data on the web, especially with the advent and dominance of the Representational State Transfer (REST) architectural style for designing and building web services.

Its popularity and flexibility have also made it one of the most common formats for file storage. It has inspired, and become the preferred way of structuring data in, NoSQL document stores such as MongoDB.

From capturing user input to transferring data to and from web services, source systems, and data stores, JSON has become essential. Our growing dependence on it makes validating JSON data all the more important.

This blog post explores the features of a custom StreamSets Data Collector Engine (SDC) processor that validates JSON data against an associated schema, and shows how it can be used in a StreamSets pipeline.

The JSON Schema

A JSON Schema is a JSON document that describes (or annotates) and validates other JSON documents; it is, more or less, the blueprint of a JSON document. JSON Schema is specified in an Internet-Draft currently available on the Internet Engineering Task Force (IETF) website, which also defines it as the media type “application/schema+json”.

The validation vocabulary of the JSON Schema is captured in the JSON Schema Validation specification Internet-Draft.

Below is an example JSON document with an associated example schema.

Example JSON Document

{
  "name": "John Doe",
  "age": 35,
  "email": "johndoe@example.com",
  "hobbies": [
    "reading",
    "cycling",
    "swimming"
  ],
  "phone": "+441234567890"
}

Example JSON Schema

{
  "$schema": "http://json-schema.org/draft-07/schema#",
  "title": "User schema",
  "description": "A user data record",
  "type": "object",
  "properties": {
    "name": {
      "description": "The full name of a user",
      "type": "string"
    },
    "email": {
      "description": "A user's email address",
      "type": "string",
      "format": "email"
    },
    "age": {
      "type": "number",
      "minimum": 18
    }
  },
  "required": [
    "name", "age", "email", "phone"
  ]
}
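To make the schema's rules concrete, here is a minimal Python sketch that checks a document against the handful of keywords this example uses (`type`, `properties`, `required`, `minimum`, and an email `format`). It is a hypothetical illustration only. It is not the Java library discussed below, and it is not a complete JSON Schema implementation. The `validate` and `matches_type` helpers and the loose email pattern are assumptions of this sketch. It also declares `email` as a string with `"format": "email"`, because `email` is not one of JSON Schema's type names.

```python
import json
import re

# Hypothetical, deliberately small checker: it understands only the keywords
# used in the example schema (type, properties, required, minimum, format).
# It is NOT a complete JSON Schema validator.

# Loose email pattern for illustration only. Format checks are optional in
# draft-07; real validators apply stricter rules when they enable them.
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def matches_type(value, expected):
    """Return True if a decoded JSON value matches a JSON Schema type name."""
    if expected == "object":
        return isinstance(value, dict)
    if expected == "array":
        return isinstance(value, list)
    if expected == "string":
        return isinstance(value, str)
    if expected == "boolean":
        return isinstance(value, bool)
    if expected == "null":
        return value is None
    # bool is a subclass of int in Python, so exclude it from numeric types
    if expected == "integer":
        return isinstance(value, int) and not isinstance(value, bool)
    if expected == "number":
        return isinstance(value, (int, float)) and not isinstance(value, bool)
    raise ValueError(f"{expected!r} is not a JSON Schema type")


def validate(instance, schema, path="#"):
    """Return a list of error messages; an empty list means the instance is valid."""
    errors = []
    expected = schema.get("type")  # this sketch assumes a single type name
    if expected is not None and not matches_type(instance, expected):
        errors.append(f"{path}: expected type {expected}, found {type(instance).__name__}")
    # As in JSON Schema, minimum/format/required apply only to matching value kinds
    if "minimum" in schema and matches_type(instance, "number"):
        if instance < schema["minimum"]:
            errors.append(f"{path}: {instance} is less than minimum {schema['minimum']}")
    if schema.get("format") == "email" and isinstance(instance, str):
        if not EMAIL_RE.match(instance):
            errors.append(f"{path}: {instance!r} is not a valid email address")
    if isinstance(instance, dict):
        for name in schema.get("required", []):
            if name not in instance:
                errors.append(f"{path}: required property {name!r} is missing")
        for name, subschema in schema.get("properties", {}).items():
            if name in instance:
                errors.extend(validate(instance[name], subschema, f"{path}/{name}"))
    return errors


# The example schema's validation keywords (annotations such as title are omitted)
SCHEMA = json.loads("""
{
  "type": "object",
  "properties": {
    "name":  {"type": "string"},
    "email": {"type": "string", "format": "email"},
    "age":   {"type": "number", "minimum": 18}
  },
  "required": ["name", "age", "email", "phone"]
}
""")

DOCUMENT = json.loads("""
{"name": "John Doe", "age": 35, "email": "johndoe@example.com",
 "hobbies": ["reading", "cycling", "swimming"], "phone": "+441234567890"}
""")

print(validate(DOCUMENT, SCHEMA))  # []
```

The example document produces no errors. A record such as `{"name": "Jane", "age": "17", "email": "jane.example.com"}` would fail for three reasons: `phone` is missing, the email address is malformed, and `age` is a string. The example's `hobbies` array passes untouched because the schema does not restrict additional properties.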

The JSON Schema Validator Library

The JSON Schema Validator library is a Java library that validates JSON data according to the JSON Schema specification. It is built on the org.json (JSON-Java) API, which supports the creation, manipulation, and parsing of JSON data. It is the main library used to build the custom StreamSets Data Collector Engine processor.

For a tutorial on how to build a custom StreamSets processor, click here.

Note: When using the provided Maven archetype to generate the custom stage project, be sure to set the archetypeVersion Maven argument to your StreamSets Data Collector Engine version.

The Custom StreamSets Data Collector JSON Validator Processor

Installation

The JSON Validator Processor binary is available as a .zip file here. Extract the zip file into the StreamSets Data Collector Engine user-libs directory; after SDC is restarted, the processor appears in the SDC stage library.
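Installing the processor consists of extracting an archive and then restarting SDC. The Python sketch below illustrates the extraction step using the standard `zipfile` module. The `install_stage_lib` helper and any paths you pass to it are hypothetical, and your SDC installation may place user-libs in a different location.

```python
import zipfile
from pathlib import Path


def install_stage_lib(zip_path, user_libs_dir):
    """Extract a stage library .zip into SDC's user-libs directory.

    Hypothetical helper: returns the archive's top-level directory names so
    you can confirm what was installed. Only extract archives you trust.
    """
    user_libs = Path(user_libs_dir)
    if not user_libs.is_dir():
        raise FileNotFoundError(f"user-libs directory not found: {user_libs}")
    with zipfile.ZipFile(zip_path) as archive:
        archive.extractall(user_libs)
        return sorted({Path(name).parts[0] for name in archive.namelist()})
```

For example, you might call `install_stage_lib("json-validator.zip", "/opt/sdc/user-libs")` (both paths are hypothetical) and then restart SDC so that the processor appears in the stage library.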

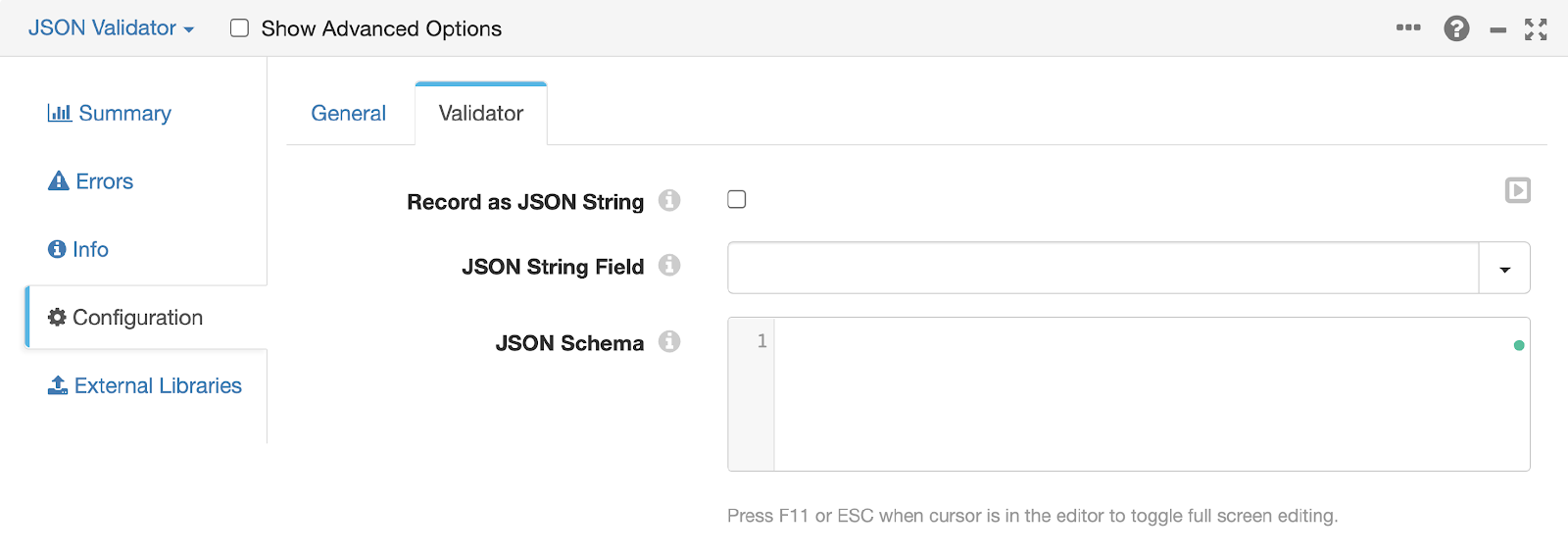

Configuration of Properties in Your JSON Validator Processor

To use the JSON Validator Processor in a pipeline, two of the following three configuration properties must be specified:

- The Record as JSON String config:
  - If checked, the processor converts the full SDC record into a JSON string, which is then validated. This allows data in any format that a Data Collector origin stage can read to be validated against the JSON schema, even if it was not originally a JSON document.
  - If unchecked, the processor validates the field specified by the JSON String Field config option.

- The JSON String Field config: the field in the incoming SDC record that contains the ‘stringified’ JSON data to be validated.
  - If the specified field contains an invalid JSON object string, an exception is thrown on pipeline validation.

- The JSON Schema config: the draft-04, draft-06, or draft-07 JSON schema used to validate the JSON data taken from the JSON String Field or the SDC record.
  - An exception is thrown on pipeline validation if the schema is not a valid JSON object or does not conform to the specified schema version.
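The interplay between these options can be modeled in a few lines. The Python sketch below is a hypothetical model, not the processor's Java source. A plain dict stands in for an SDC record, and `json_string_field` is a top-level key rather than a full SDC field path such as `/payload`. `RecordError` and `ConfigError` are invented names for the failures described above.

```python
import json


class ConfigError(Exception):
    """Hypothetical: raised when the stage is misconfigured."""


class RecordError(Exception):
    """Hypothetical: raised when a record cannot be turned into JSON to validate."""


def json_to_validate(record, record_as_json_string, json_string_field=None):
    """Return the decoded JSON object that would be checked against the schema.

    record: a dict standing in for an SDC record's root map field.
    """
    if record_as_json_string:
        # Serialize the whole record, whatever format the origin read it from.
        # Real SDC records can hold dates, decimals, etc. that need conversion.
        text = json.dumps(record)
    elif json_string_field is None:
        raise ConfigError("JSON String Field is required when Record as JSON String is unchecked")
    elif json_string_field not in record:
        raise RecordError(f"field {json_string_field!r} not found in record")
    else:
        text = record[json_string_field]  # expected to hold 'stringified' JSON

    try:
        value = json.loads(text)
    except (TypeError, json.JSONDecodeError) as exc:
        raise RecordError(f"not a valid JSON string: {exc}") from exc
    if not isinstance(value, dict):
        raise RecordError("JSON string does not contain a JSON object")
    return value
```

When Record as JSON String is checked, the whole record becomes the document to validate. Otherwise, only the string held in the named field is parsed, and anything that is not a JSON object is rejected.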

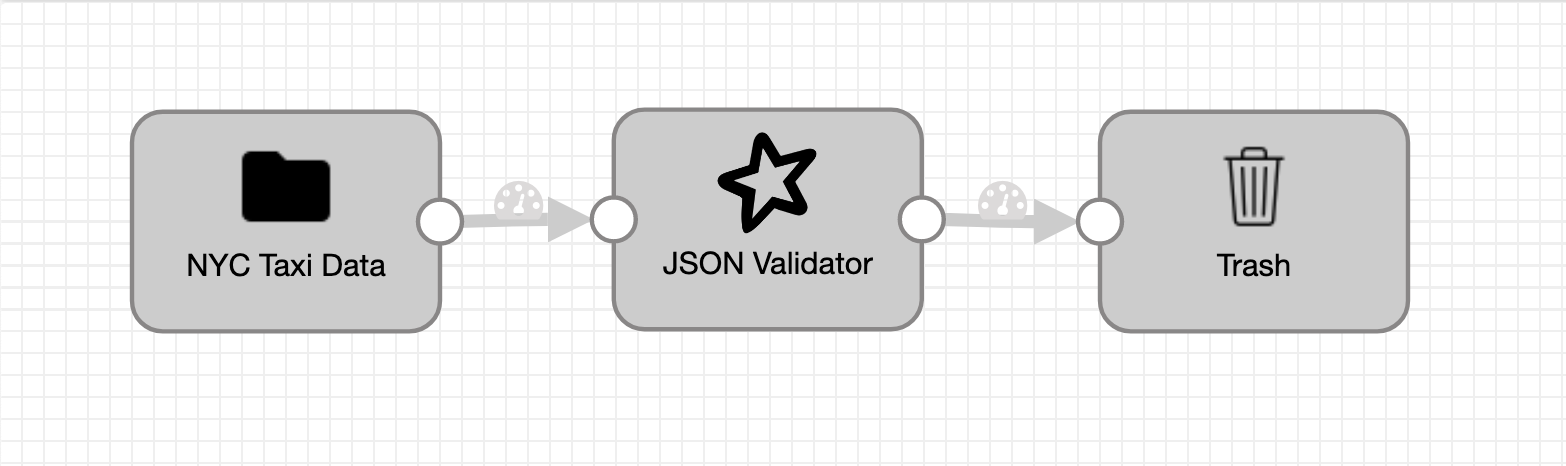

Example Pipeline Using the JSON Validator

To demonstrate the capability of the JSON Validator Processor, we use a basic three-stage pipeline consisting of a single origin, processor, and destination:

- Origin stage (Directory): reads a .csv file of NYC taxi data

- Processor stage: the JSON Validator

- Destination stage: Trash

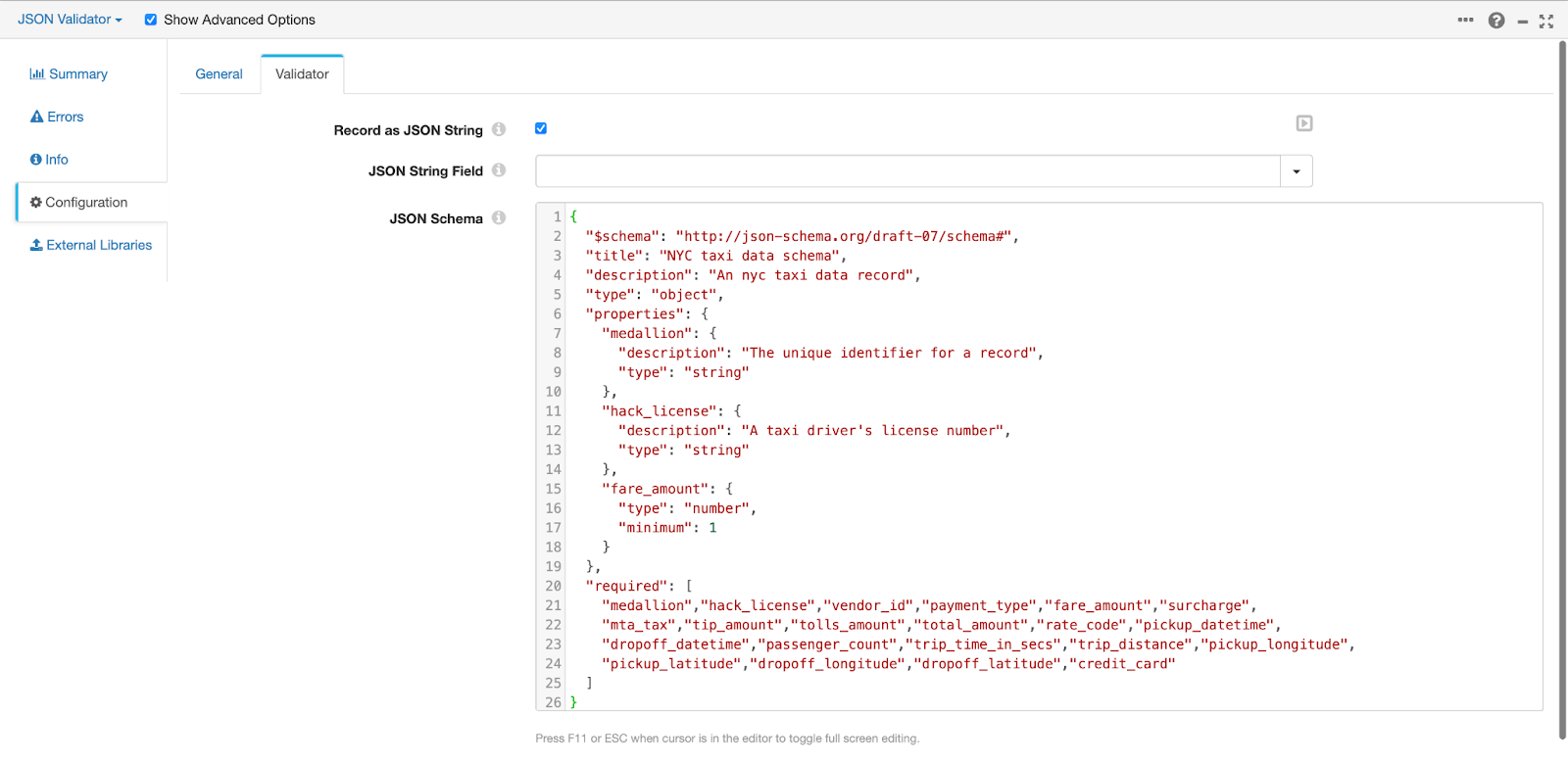

The JSON Validator in this example pipeline is configured as follows:

When the pipeline is validated, the JSON schema is checked to ensure that it:

- is a valid JSON object, and

- conforms to the specified $schema (in this case, our schema is validated against the draft-07 meta-schema).
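The two pipeline-validation checks can be sketched as follows. This Python sketch is hypothetical. It first confirms that the schema parses to a JSON object and that its `$schema` names one of the three supported drafts. It then performs one small slice of meta-schema validation: rejecting unknown `type` names, which would catch a declaration such as `"type": "email"`. A real implementation validates the whole schema against the draft's meta-schema. The names `check_schema` and `_check_types`, and the decision to require `$schema`, are assumptions of this sketch.

```python
import json

# Meta-schema URIs for the drafts the processor is described as supporting
# (normalized without the trailing '#').
SUPPORTED_DRAFTS = {
    "http://json-schema.org/draft-04/schema": "draft-04",
    "http://json-schema.org/draft-06/schema": "draft-06",
    "http://json-schema.org/draft-07/schema": "draft-07",
}
VALID_TYPES = {"null", "boolean", "object", "array", "number", "string", "integer"}


def check_schema(schema_text):
    """Return (draft, problems); raise ValueError if the schema is unusable."""
    try:
        schema = json.loads(schema_text)
    except json.JSONDecodeError as exc:
        raise ValueError(f"schema is not valid JSON: {exc}") from exc
    if not isinstance(schema, dict):
        raise ValueError("schema must be a JSON object")
    draft = SUPPORTED_DRAFTS.get(str(schema.get("$schema", "")).rstrip("#"))
    if draft is None:
        raise ValueError(f"unsupported or missing $schema: {schema.get('$schema')!r}")
    problems = []
    _check_types(schema, "#", problems)
    return draft, problems


def _check_types(node, path, problems):
    """Flag 'type' values outside the seven JSON Schema type names.

    Only descends into 'properties' and 'items'; a real meta-schema check
    covers every keyword.
    """
    declared = node.get("type")
    names = declared if isinstance(declared, list) else ([] if declared is None else [declared])
    for name in names:
        if not isinstance(name, str) or name not in VALID_TYPES:
            problems.append(f"{path}/type: {name!r} is not a JSON Schema type")
    props = node.get("properties")
    if isinstance(props, dict):
        for prop, sub in props.items():
            if isinstance(sub, dict):
                _check_types(sub, f"{path}/properties/{prop}", problems)
    items = node.get("items")
    if isinstance(items, dict):
        _check_types(items, f"{path}/items", problems)
```

The sketch separates fatal problems, such as unparseable JSON or an unsupported draft, from a list of conformance problems. This mirrors the two bullet points above while keeping each check easy to test on its own.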

In our JSON schema, we specify a rule requiring the fare_amount field of our NYC taxi data to be a number not less than one. However, because every field the Directory origin reads from our NYC taxi data is a string, we expect the JSON Validator processor to produce an error for every record when we preview or run the pipeline.
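The reason every record fails is easy to reproduce: a CSV reader yields every field as text. The Python sketch below applies the fare_amount rule to two made-up rows. It expresses the rule as the schema fragment `{"type": "number", "minimum": 1}`. That fragment is an assumption, because the pipeline's actual schema is only shown in the screenshot. `minimum` is inclusive, which matches "not less than one". The column names in the sample rows are also hypothetical.

```python
import csv
import io

# Assumed schema fragment for the rule described above; `minimum` is
# inclusive, so 1 itself passes ("not less than one").
FARE_RULE = {"type": "number", "minimum": 1}

# Two made-up rows shaped like a taxi-trip CSV (column names are hypothetical).
SAMPLE_CSV = "vendor_id,fare_amount\nVTS,5.5\nCMT,0.5\n"


def check_fare(value):
    """Apply FARE_RULE to one field value; return an error message or None."""
    # Implements FARE_RULE's "type": "number" (booleans are not numbers)
    if isinstance(value, bool) or not isinstance(value, (int, float)):
        return f"expected type: Number, found: {type(value).__name__}"
    if value < FARE_RULE["minimum"]:
        return f"{value} is less than minimum {FARE_RULE['minimum']}"
    return None


def validate_rows(csv_text, convert=False):
    """Return one result per row, optionally converting fare_amount to float first."""
    results = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        fare = row["fare_amount"]  # csv.DictReader always yields strings
        if convert:
            fare = float(fare)
        results.append(check_fare(fare))
    return results


print(validate_rows(SAMPLE_CSV))                # both rows fail the type check
print(validate_rows(SAMPLE_CSV, convert=True))  # [None, '0.5 is less than minimum 1']
```

Without conversion, both rows fail the type check. Once fare_amount is converted to a number, only the out-of-range fare is reported. In a pipeline, the conversion could be done by a stage placed before the validator, such as a Field Type Converter processor.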

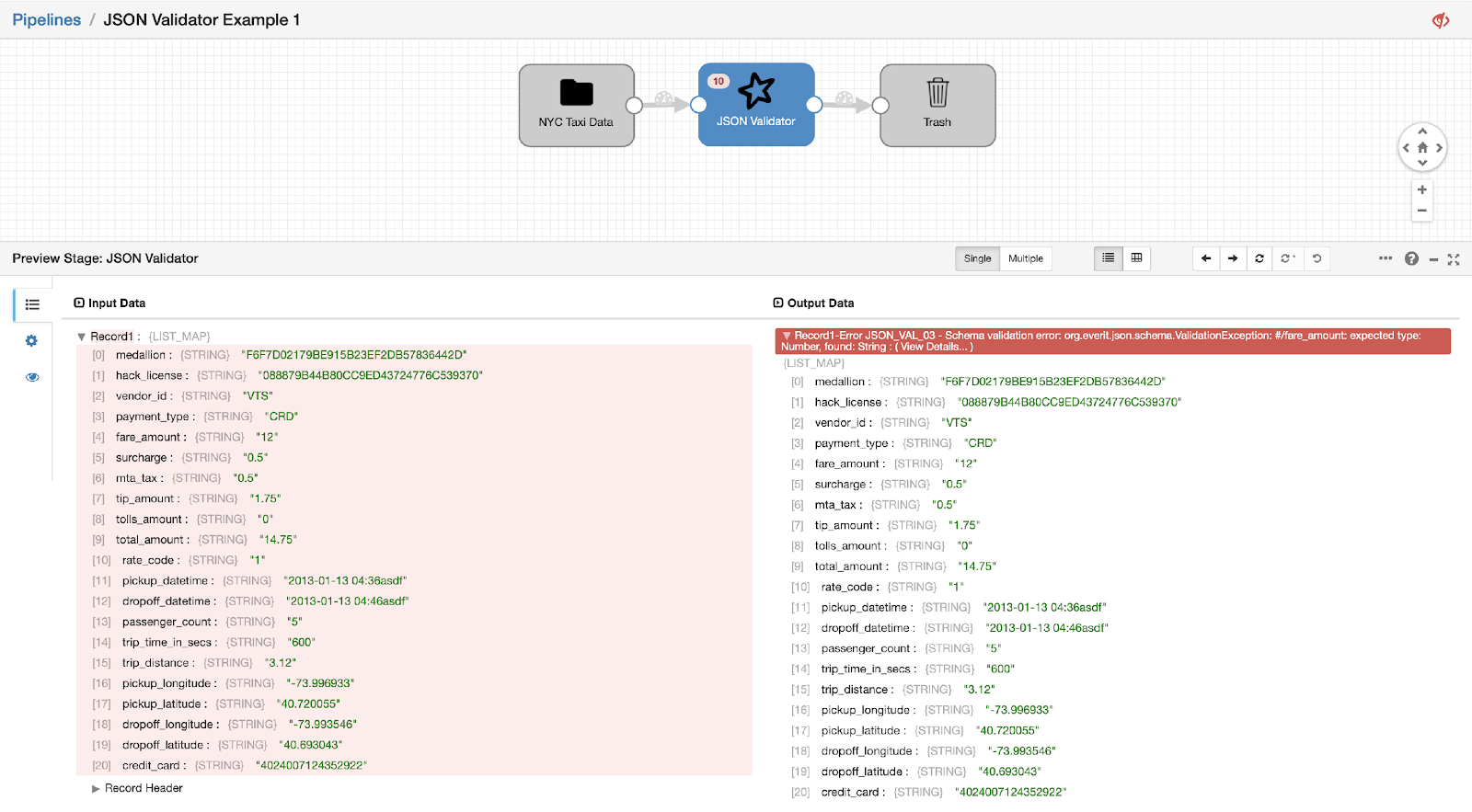

Let’s preview our pipeline…

As expected, we receive a schema validation error stating that the expected type for the fare_amount field is Number but the actual type is String.

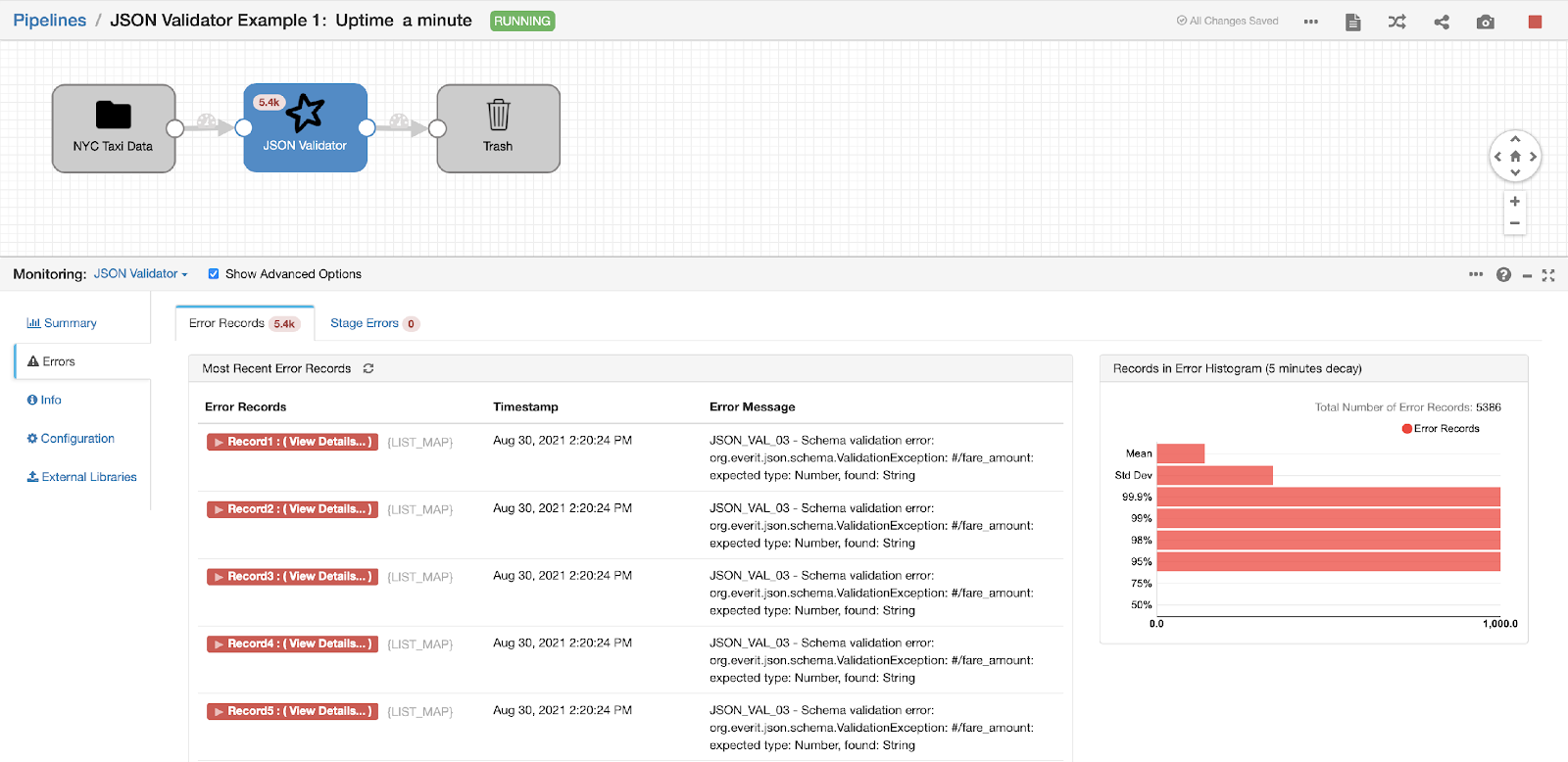

Running the pipeline…

When monitoring the JSON Validator processor, we can view the error metrics and record details in the Errors tab. As expected, all 5386 records of our NYC taxi data fail validation against our JSON schema.

Closing Thoughts

A number presented as text, a digit missing from a number, a boolean flag represented as an integer (1 or 0), a duplicated item in a list, a special character missing from a string of text: any one of the million things that can go wrong with data and its representation can cost individuals and businesses a lot of money. In such a world, a tool like the JSON Validator Processor, and the role it plays in a strategic platform like StreamSets, comes in handy.

Have ideas about how you could use the JSON Validator Processor in your own pipelines, or how it could be improved? Join us in our Community to share your best practices.