Data in flight is often perceived as more challenging to work with than “landed” data sitting quietly in a storage platform. With a high volume and variety of data constantly streaming in from IoT sensors, you need a holistic DataOps approach that goes beyond traditional, stationary transactional data. The StreamSets DataOps platform provides an end-to-end event stream processing system for streaming IoT data that turns raw data into useful information in real time. Some benefits of using StreamSets to analyze IoT data are:

- Manage and analyze IoT data in real time. As large amounts of data rapidly stream into StreamSets, it cleanses, normalizes, and aggregates the data immediately.

- Filter data in real time by analyzing whether a specific event is relevant. For example, if a sensor's temperature reading stays constant, we may not want to store the same value again. If the temperature varies, the reading becomes relevant and is propagated for further action.

- Automatically identify and alert on changes in incoming data streams, whether in their structure, in their semantic values, or driven by infrastructure changes.

- Deliver additional value by analyzing IoT data in multiple phases with a microservice architecture.
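The filtering idea in the list above (drop a reading when the sensor's value has not changed) can be sketched in a few lines of Python. This is only an illustration of the logic, not how StreamSets implements it; the function name `changed_readings`, the `(sensor_id, temperature)` record shape, and the `tolerance` parameter are assumptions made for this example.

```python
from typing import Dict, Iterable, Iterator, Tuple

# Hypothetical record shape for this sketch: (sensor_id, temperature)
Reading = Tuple[str, float]


def changed_readings(
    readings: Iterable[Reading], tolerance: float = 0.0
) -> Iterator[Reading]:
    """Yield a reading only if it differs from the last value kept for that sensor."""
    last_kept: Dict[str, float] = {}
    for sensor_id, value in readings:
        previous = last_kept.get(sensor_id)
        # Keep the first reading of each sensor, and any reading that moved
        # by more than the tolerance since the last one we kept.
        if previous is None or abs(value - previous) > tolerance:
            last_kept[sensor_id] = value
            yield sensor_id, value
```

With the default tolerance of `0.0`, only exact repeats are dropped; a larger tolerance would also suppress small fluctuations.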

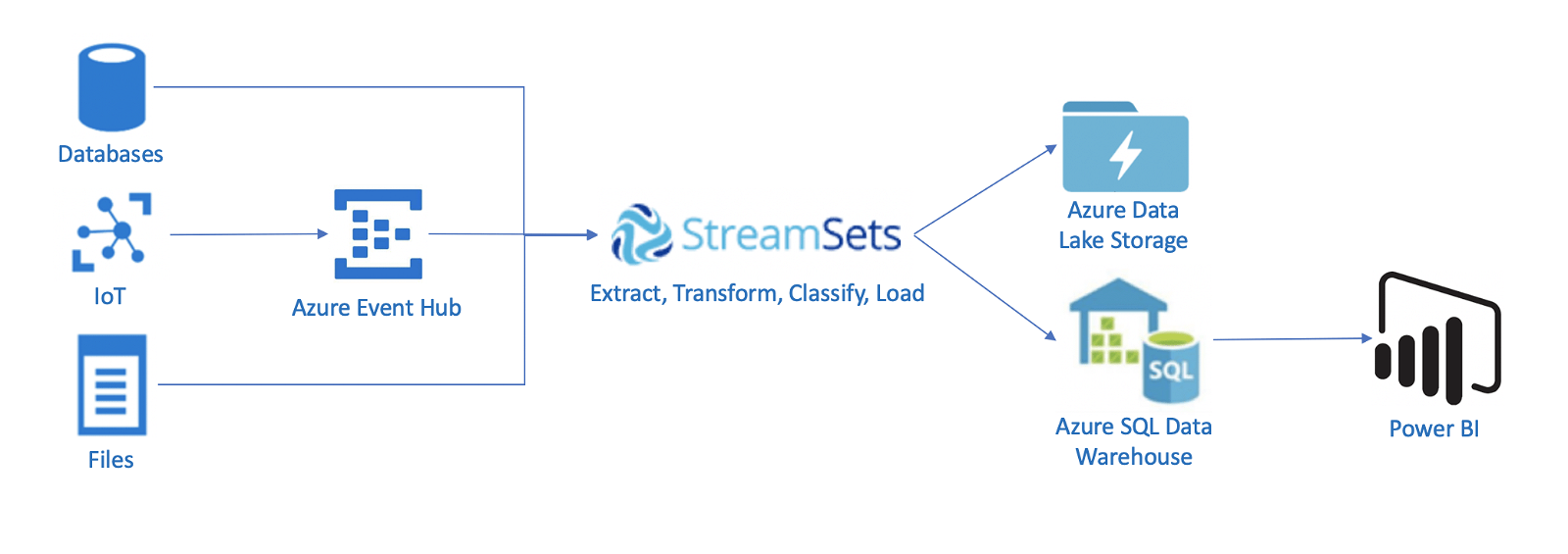

Pipeline to analyze sensor readings

Consider a use case where sensor readings are generated and sent over Azure IoT Hub from a device. In StreamSets…

- The sensor readings are fetched using the Azure Event Hub origin.

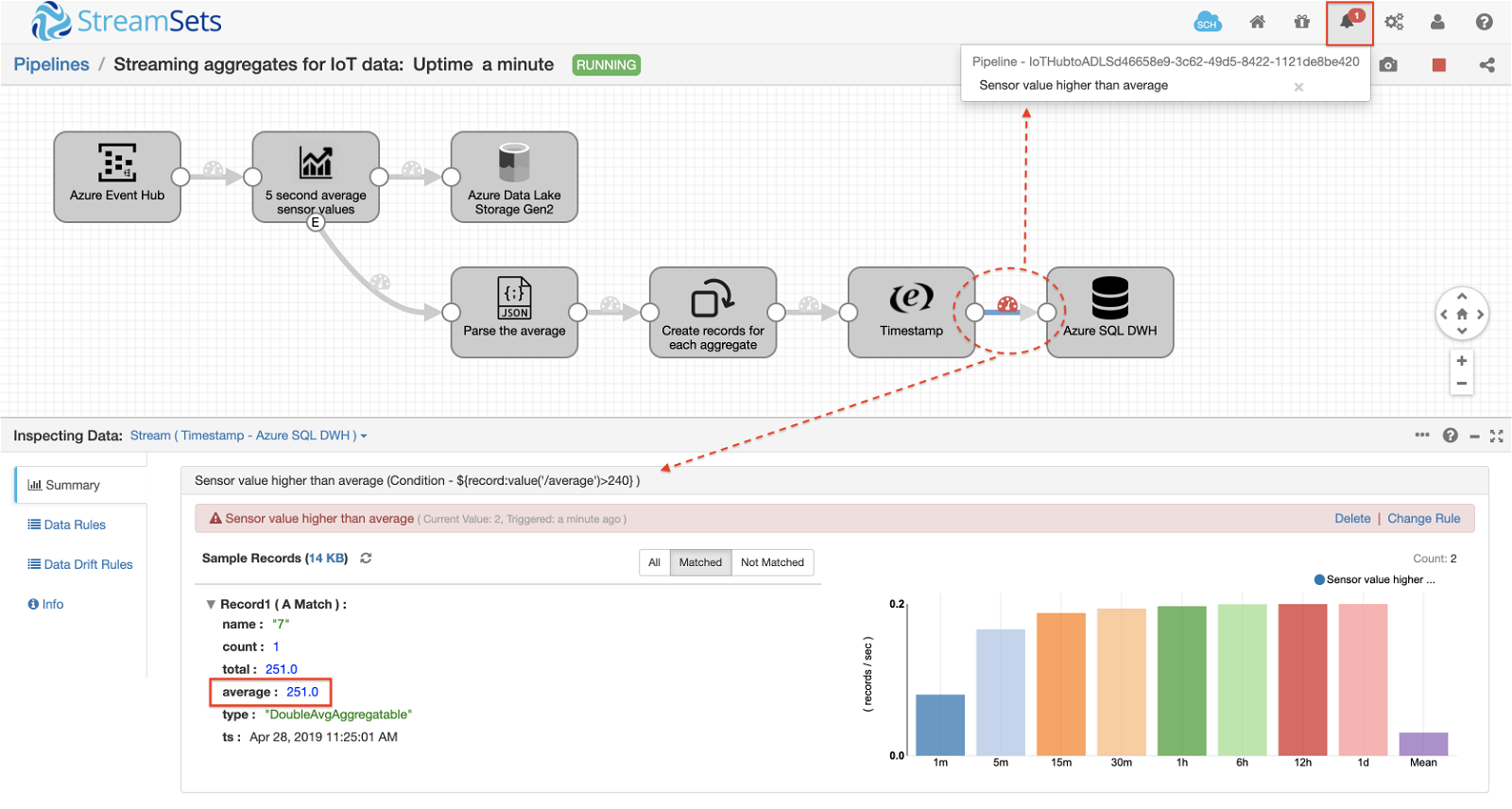

- We then analyze this continuously flowing data to calculate the average sensor value. For this, we use the Windowing Aggregator processor, which supports multiple types of aggregations over rolling and sliding windows. The aggregator is configured to calculate the average sensor value over a 5-second rolling window.

- In an ideal state, we do not expect drastic changes in the sensor values over a period of time. However, if we do detect a change in the sensor readings within a window of time, we must act immediately. To detect this anomaly, we configure the Windowing Aggregator to calculate the average sensor value over a 5-second period. (You can adjust this period to suit your requirements.)

- If the average value per sensor calculated by the Windowing Aggregator is above a normal threshold, an alert is triggered via a webhook.
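To make the aggregation step above concrete, here is a minimal Python sketch of a per-sensor average over fixed 5-second windows. It assumes non-overlapping, clock-aligned windows and a hypothetical `(timestamp_seconds, sensor_id, value)` record shape; the function name `windowed_averages` is invented for this example, and the Windowing Aggregator itself is configured in the pipeline UI rather than written as code.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

# Hypothetical record shape for this sketch: (timestamp_seconds, sensor_id, value)
Reading = Tuple[float, str, float]


def windowed_averages(
    readings: Iterable[Reading], window_seconds: float = 5.0
) -> Dict[Tuple[int, str], float]:
    """Average the readings of each sensor within fixed, non-overlapping windows.

    Returns a mapping of (window_index, sensor_id) to the average value,
    where window_index = floor(timestamp / window_seconds).
    """
    # Each bucket holds [running_sum, count] for one (window, sensor) pair.
    buckets: Dict[Tuple[int, str], List[float]] = defaultdict(lambda: [0.0, 0])
    for timestamp, sensor_id, value in readings:
        window_index = int(timestamp // window_seconds)
        bucket = buckets[(window_index, sensor_id)]
        bucket[0] += value
        bucket[1] += 1
    return {key: total / count for key, (total, count) in buckets.items()}
```

Each resulting average can then be compared against the alert threshold, one value per sensor per window.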

The pipeline then sends all aggregates to Azure SQL Data Warehouse and simultaneously archives all the sensor readings in Azure Data Lake Storage Gen2.

A running pipeline is shown below:

Detecting Anomalies

A Data Rule sends an alert when the average reading rises above the normal threshold. In this pipeline, I’ve set the Data Rule to trigger an alert via a webhook when the average sensor reading is above 240. The screenshot below shows the alert being triggered along with a sample record with its reading.
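Outside of StreamSets, the same rule could be approximated as follows. This is a sketch under stated assumptions: the webhook URL is a placeholder, the JSON payload fields are invented for illustration, and `build_alert_request` is a hypothetical helper. Only the threshold of 240 comes from the pipeline described here.

```python
import json
import urllib.request
from typing import Optional

THRESHOLD = 240.0  # alert threshold used in this pipeline
WEBHOOK_URL = "https://example.com/hooks/sensor-alert"  # placeholder, not a real endpoint


def build_alert_request(
    sensor_id: str,
    average: float,
    threshold: float = THRESHOLD,
    url: str = WEBHOOK_URL,
) -> Optional[urllib.request.Request]:
    """Return a POST request for the webhook, or None if no alert is needed."""
    if average <= threshold:
        return None
    # Payload field names are assumptions made for this example.
    payload = {"sensor_id": sensor_id, "average": average, "threshold": threshold}
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
```

The request is built but not sent, so the rule can be checked without a network call; passing the returned object to `urllib.request.urlopen` would deliver the alert.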

Summary

With real-time sensor readings coming through Azure Event Hub, StreamSets provides a straightforward way to analyze data in real time and immediately send alerts when anomalies are detected. Get started with StreamSets Data Collector by launching it directly from Azure Marketplace. To learn more about StreamSets for IoT, visit our website.