In the fast-paced world of data-driven decision-making, organizations are increasingly adopting modern data integration tools like StreamSets Transformer for Snowflake to realize the full potential of their data within Snowflake. However, while empowering employees with data access is essential for agility, it raises concerns about data governance and the potential for shadow IT.

In this blog post, we will explore best practices for using Transformer for Snowflake to balance employee empowerment with governance, ensuring data reliability and security while minimizing shadow IT.

Creating an Analytics Sandbox

A crucial first step is establishing an analytics database within Snowflake. Here, every employee is granted their own schema, which gives them a sandbox environment where they can explore data and quickly answer their own questions. This approach encourages a data-driven culture and empowers employees to make informed decisions, boosting productivity and innovation. However, these sandbox environments should never be treated as production environments, both to avoid confusion between the two and to protect data quality and availability in production.
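As a rough illustration of the one-schema-per-employee idea, the sketch below generates the Snowflake SQL an administrator might run. The database name `ANALYTICS`, the `SANDBOX_` prefix, and the per-user role naming are assumptions made for this example, not conventions prescribed by Snowflake or StreamSets.

```python
import re


def sandbox_statements(usernames, database="ANALYTICS"):
    """Build SQL that gives each employee a personal sandbox schema.

    The database name, schema prefix, and role naming are hypothetical.
    """
    statements = []
    for name in usernames:
        # Derive a safe identifier: non-alphanumerics become underscores.
        ident = re.sub(r"[^A-Za-z0-9]", "_", name).upper()
        schema = f"{database}.SANDBOX_{ident}"
        role = f"SANDBOX_{ident}_ROLE"
        statements += [
            f"CREATE SCHEMA IF NOT EXISTS {schema};",
            f"CREATE ROLE IF NOT EXISTS {role};",
            f"GRANT USAGE ON DATABASE {database} TO ROLE {role};",
            # Full control, but only inside the employee's own schema.
            f"GRANT ALL PRIVILEGES ON SCHEMA {schema} TO ROLE {role};",
        ]
    return statements


if __name__ == "__main__":
    for stmt in sandbox_statements(["jane.doe", "sam"]):
        print(stmt)
```

Generating the statements rather than writing them by hand keeps sandbox naming consistent as people join, and the output can be reviewed before anything is executed.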

Limiting Write Access to Production Databases

To ensure data integrity and avoid shadow IT concerns, restrict write access to the production and analytics databases inside Snowflake to only the database owner, central IT, or data engineering teams. This approach not only fosters trust in data reliability but also ensures that data is managed by professionals who understand the nuances of data governance, security, and quality. With this measure in place, the rest of the organization knows precisely how much trust to place in the data they access, and can distinguish data that may require further verification from data that can be considered rock-solid.

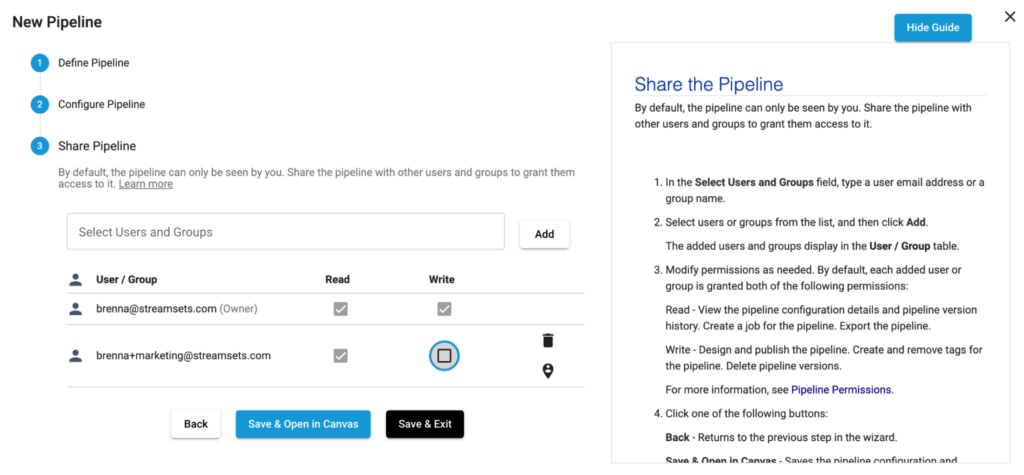

StreamSets Transformer for Snowflake can help limit access to production with role-based access control on pipelines and fragments.
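On the Snowflake side, the write restriction described in this section could be expressed as read-only grants for every role outside the owning team. The following is a minimal sketch that assumes a database named `PROD` and a role named `ANALYST`; both names are hypothetical, and a real deployment would likely also cover views and other object types.

```python
def read_only_grants(role, database="PROD", schemas=("PUBLIC",)):
    """Build SQL granting a role read-only access to the given schemas.

    Only USAGE and SELECT are granted, so the role cannot write.
    The role, database, and schema names are hypothetical.
    """
    statements = [f"GRANT USAGE ON DATABASE {database} TO ROLE {role};"]
    for schema in schemas:
        target = f"{database}.{schema}"
        statements += [
            f"GRANT USAGE ON SCHEMA {target} TO ROLE {role};",
            f"GRANT SELECT ON ALL TABLES IN SCHEMA {target} TO ROLE {role};",
            # Cover tables created later so access does not drift.
            f"GRANT SELECT ON FUTURE TABLES IN SCHEMA {target} TO ROLE {role};",
        ]
    return statements


if __name__ == "__main__":
    for stmt in read_only_grants("ANALYST", schemas=("SALES", "FINANCE")):
        print(stmt)
```

Because no INSERT, UPDATE, DELETE, or ownership privilege is ever emitted, anyone holding only this role can query production data but cannot change it.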

Promoting Data Awareness and Education

Educating employees on data awareness and best practices is critical to the process. By imparting knowledge about data sources, transformations, and potential pitfalls, the organization can prevent data misinterpretation and discourage ad-hoc data manipulation, which might lead to shadow IT scenarios. With a clear understanding of data governance, employees can confidently leverage the available data while adhering to established guidelines and protocols.

Implementing Data Quality Checks

Use StreamSets Transformer for Snowflake to set up robust data quality checks. Configuring data pipelines to validate and cleanse incoming data allows potential inaccuracies and inconsistencies to be flagged and addressed proactively. This not only ensures the reliability of data within the analytics database but also fosters trust in the overall data ecosystem.

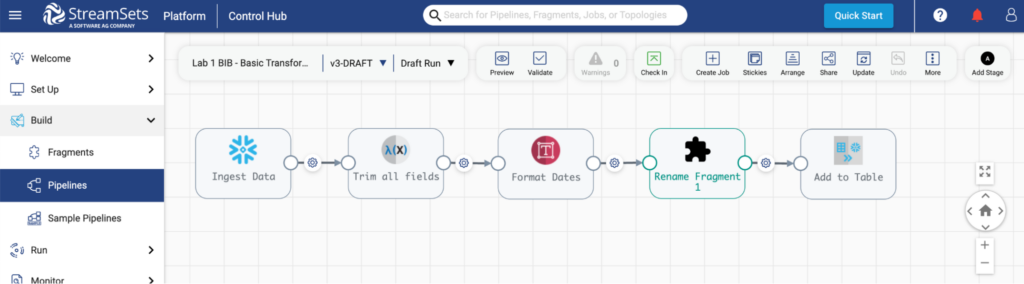

For example, the Transformer for Snowflake pipeline above uses a reusable pipeline fragment to apply data quality rules to the data flowing through it. A fragment like this can be shared across an organization and applied as often as necessary to enforce and control data quality.
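To make the idea of a shared, reusable rule set concrete, here is a small sketch in plain Python. It does not use the Transformer for Snowflake API or its fragment format; the column names (`customer_id`, `amount`) and the two rules are invented for illustration.

```python
from typing import Callable, Dict, List, Tuple

Row = Dict[str, object]
Rule = Tuple[str, Callable[[Row], bool]]

# A shared rule set, playing the role of a reusable fragment.
# Both rules and the column names they check are hypothetical.
RULES: List[Rule] = [
    ("customer_id is present", lambda r: r.get("customer_id") is not None),
    (
        "amount is non-negative",
        lambda r: isinstance(r.get("amount"), (int, float)) and r["amount"] >= 0,
    ),
]


def apply_rules(rows: List[Row], rules: List[Rule] = RULES):
    """Split rows into those passing every rule and those flagged.

    Each flagged entry pairs the row with the names of the rules it failed.
    """
    passed, flagged = [], []
    for row in rows:
        failures = [name for name, check in rules if not check(row)]
        if failures:
            flagged.append((row, failures))
        else:
            passed.append(row)
    return passed, flagged


if __name__ == "__main__":
    good, bad = apply_rules([
        {"customer_id": 1, "amount": 10.0},
        {"customer_id": None, "amount": -5},
    ])
    print(len(good), "passed;", len(bad), "flagged")
```

Keeping the rules in one named list means every pipeline that imports them enforces the same definitions, which is the same benefit a shared fragment is meant to provide.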

Harness the Full Potential of Data

StreamSets Transformer for Snowflake offers a powerful data integration solution that lets organizations harness the full potential of their data while avoiding the pitfalls of shadow IT. Companies can strike the perfect balance between data agility and reliability by creating an analytics database that encourages employee exploration, coupled with the governance required for production and analytics databases. By adopting best practices, promoting data awareness, and implementing data quality checks, organizations can create a data-driven culture that enables innovation while safeguarding data integrity and trust.