Modern Analytics and Applications Consume Data Constantly

Digital transformation has become a top concern for business leaders. No matter your industry, your business is fast becoming a data business, and real-time applications, embedded with the smarts of streaming analytics, are the face of change.

Streaming data helps make the perfect offer to a loyal customer, alerts a mechanic to an issue before failure, or detects a cyber threat before damage is done. Predictive analytics and real-time decision making need a constant supply of fresh data. Yesterday’s data is historical data. Combine it with right-now data for insightful action.

The StreamSets Data Integration Platform Advantage

StreamSets makes it easy to build pipelines that capture event-based data for streaming analytics and process it in-flight to fuel your real-time applications.

Flexible Hybrid and Multi-cloud Architecture

Easily migrate your work to any data platform or cloud infrastructure.

How It Works

Rapid Ingestion

Designed for streaming data, StreamSets Platform supports the design patterns and execution engines you need to get the freshest data from all your sources into your real-time applications and event-driven architectures (EDA).

- Streaming data ingestion

- Edge data shipping

- Time series data

- Real-time APIs

Transformation in Flight

Read out of any system for real time and apply lightweight transformations to power streaming analytics in flight. Call out to machine learning services or model on the fly to classify data as it streams.

Operationalize and Scale

StreamSets Data Platform gives you one place to monitor and manage all your pipelines, with second-by-second visibility into data flows so you can operate your event-driven architectures with confidence. Data performance SLAs and security policies enforced at run-time ensure the safety and reliability of your data as it flows into your real-time applications.

Awards and Recognition

Frequently Asked Questions

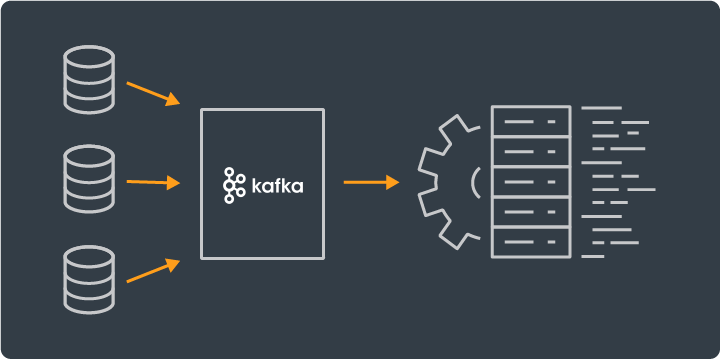

What is Kafka?

Apache Kafka is an open-source distributed event streaming platform (also known as a “pub/sub” messaging system) that brokers communication between bare-metal servers, virtual machines, and cloud-native services. StreamSets supports Kafka as a source, broker, and destination allowing you to build complex Kafka pipelines with message brokering at every stage.

What is the difference between Kafka and Kafka Streams?

Kafka is the data streaming platform, while Kafka Streams is a client library for building applications and microservices, where the input and output data are stored in Kafka cluster. It combines the simplicity of writing and deploying standard Java and Scala applications on the client side with the benefits of Kafka's server-side cluster technology.