Last week, a sizable group of professionals descended on Pier 27 in San Francisco for the 3rd Annual Kafka Summit. StreamSets was excited to support the event, especially because so many of our customers rely on Kafka as a critical mechanism for delivering real-time capabilities. Key themes for this year’s summit included microservices, Kubernetes, and the importance of a DataOps mindset.

Apache Kafka is a distributed streaming platform that reads and writes streaming data like a messaging system and enables developers to build real-time applications. Enterprise adoption of Apache Kafka has grown over the past couple of years (as evidenced by keynotes from leaders at Capital One, Slack, and Microsoft), but the overarching narrative at this conference was the broader trend towards using streaming data.

In general, developers want to build streaming applications without the headache of managing traditional database and data platform overhead. However, the reality today is that Apache Kafka is most commonly paired with big data and NoSQL backends. The cloud offers some promising opportunities for building lighter-weight application backends, which is why we saw an increase in the number of practitioner-focused talks on popular cloud-managed services like Azure Data Lake and Google Cloud.

Why StreamSets for Kafka?

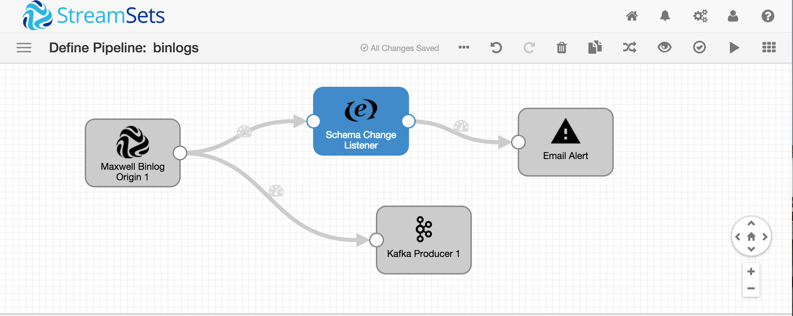

StreamSets helps customers ease the burden of hand coding Apache Kafka pipelines. More than just a supported source and destination, Kafka has become a canonical component of modern pipelines that need to scale across the business. Our customers can use Apache Kafka through a visual interface and deploy those pipelines systematically for multiple workloads. This helps companies expand the scale of their stream processing jobs and monitor and deploy data streams intelligently. The business implication is that companies can scale their data teams efficiently and operate continuously across the enterprise.

However, for most organizations, the data they need to deliver to analysts and data scientists is not limited to pipelines that use Apache Kafka. That is why StreamSets built a DataOps platform that leverages the best things about Apache Kafka while addressing a myriad of other data pipelining requirements. DataOps is the only way to ensure a strategic, comprehensive approach to data pipelines.

By our estimation, Kafka will continue to be a central part of the streaming data solution space, so let’s take a look at some of the exciting things on the horizon for Kafka.

Common Topics at Kafka Summit

Real-time Applications

As companies aim to move beyond the limitations of batch processing, real-time stream processing opens up new business value. Building these applications is often a highly customized effort, but with common open-source tooling many users can design applications that leverage streaming data from day one, which is considerably easier than re-engineering a batch application. StreamSets customers get the best of the open-source ecosystem alongside the tools they need to meet real enterprise requirements for security, governance, and scale. Check out why One Click Retail built their real-time application with StreamSets.

Real-time Events

For event-driven architectures, messaging is far more than a simple pipe that connects systems together. Part messaging system, part distributed database, streaming systems let you store, join, aggregate, and reshape data from across a company before pushing it wherever it is needed. When combined with public clouds, where infrastructure can be provisioned dynamically, the result makes data entirely self-service. When it comes to real-time events, with StreamSets you have options. You can leverage Spark Streaming or a variety of event-messaging systems and still get the best results from your Kafka events.
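To make the "store, join, aggregate" idea concrete, here is a minimal sketch in plain Python. It is not Kafka or StreamSets code: the `RegionRevenue` class, the event fields, and the sample values are all hypothetical, and the in-memory dictionaries merely stand in for the state a real streaming system would keep durably.

```python
from collections import defaultdict
from typing import Dict


class RegionRevenue:
    """Toy stream processor (hypothetical): joins order events to stored
    customer records and keeps a running revenue total per region."""

    def __init__(self) -> None:
        # "Store": latest known region for each customer.
        self.region_of: Dict[str, str] = {}
        # "Aggregate": running revenue per region.
        self.totals: Dict[str, float] = defaultdict(float)

    def on_customer(self, customer_id: str, region: str) -> None:
        """Handle a customer event by remembering the customer's region."""
        self.region_of[customer_id] = region

    def on_order(self, customer_id: str, amount: float) -> None:
        """Handle an order event: join it to the stored customer record,
        then add the amount to that region's total."""
        region = self.region_of.get(customer_id, "unknown")
        self.totals[region] += amount

    def snapshot(self) -> Dict[str, float]:
        """Return a copy of the current totals, ready to push downstream."""
        return dict(self.totals)


if __name__ == "__main__":
    processor = RegionRevenue()
    processor.on_customer("c1", "west")
    processor.on_customer("c2", "east")
    processor.on_order("c1", 10.0)
    processor.on_order("c2", 5.0)
    processor.on_order("c1", 2.5)
    print(processor.snapshot())
```

Each event updates the state as it arrives, so the totals are always current rather than recomputed in a nightly batch. An order for a customer that has not been seen yet is counted under "unknown", which is one simple way to handle a join against missing data.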

Kubernetes

There is no ignoring the growing focus on dynamic, containerized workloads. Kubernetes is a portable, extensible open-source platform that facilitates configuration and automation of functional software environments. The draw of Kubernetes comes from its fairly simple operation and its ability to run reliably at scale. Basically, Kubernetes lets you run your applications and services anywhere, and Kafka enables you to make your data accessible instantaneously, anywhere. StreamSets Data Collector can run via Kubernetes StatefulSets and as a Kubernetes Deployment. Recently, StreamSets Control Hub added a Control Agent for Kubernetes that supports creating and managing Data Collector deployments, and a Pipeline Designer that allows designing pipelines without having to install Data Collectors.

Cloud

Kafka was built around the core tenet of scaling to meet the needs of streaming data. The public cloud gives users the flexibility to dynamically scale infrastructure to meet these demands. Cloud architecture patterns allow users to build very lean environments to support their streams. This is evident in the variety of cloud companies that have released Kafka-as-a-Service offerings. These products give developers easy access to start working with Kafka, but as workloads evolve, they often force reliance on other managed cloud services for storage, network, and compute. The result is a collection of purpose-built projects that may each address a need but don’t help define a strategy that spans cloud and on-premises. StreamSets helps you design pipelines with Apache Kafka and deploy them anywhere.

Where to find out more about Kafka and StreamSets?

We want our customers to have the tools and knowledge to make their streaming applications a success. We are happy to help you make adoption of Apache Kafka a breeze for your company. Contact us today for a personal meeting.